By JW Insights

One question the industry most wants answered is how TSMC moves so quickly from technology development to seamless mass production, ramping yield at a speed that keeps widening its lead over competitors. Intel, with its highly educated talent and the technology resources of the U.S., is in many respects far superior to TSMC, so why can’t it catch up in the race to mass-produce semiconductors? At bottom, the answer lies in the continuous improvement during mass production practiced by Taiwan’s information and communication industries, and in the unique ZD (zero-defect) quality management system they spent over forty years developing. Below, we share this complex, dynamic operating methodology for reducing errors.

As consumer electronics become more personalized and demand ever lighter and more compact designs, a single component may have to pass through hundreds of process steps. Examples include front-end semiconductor chip processing, the assembly of tens of thousands to over a million identical components together (as in Mini/Micro LED), and advanced precision packaging, all processes in which defective parts cannot be reworked. Small per-step defect rates compound: at 0.1% per step, only about 61% of units survive 500 steps (0.999^500 ≈ 0.61), a yield loss of nearly 40%, which puts mass production out of reach. Likewise, if 20 million micro LED chips with a defect rate of just 0.1 ppm are assembled into one panel, each panel will have on average two defective chips that need repair, and only about 13.5% (e^-2) of panels come out defect-free on the first pass. Applying a zero-defect quality system, once affordable only for high-margin products, to mass-market consumer products, raising the barrier to entry, and shaking off imitation and catch-up by developing countries is therefore a task Taiwanese manufacturers have continually focused on and improved.
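The compounding arithmetic in the paragraph above can be checked with a few lines of Python. This is a minimal sketch that assumes every step fails independently at the same rate, which real lines only approximate; the function names are illustrative.

```python
# Compounding of small per-step losses, using the figures quoted in the text.
def rolled_yield(loss_per_step: float, n_steps: int) -> float:
    """Fraction of units surviving n_steps independent steps."""
    return (1.0 - loss_per_step) ** n_steps


def panel_yield(n_chips: int, defect_ppm: float) -> tuple[float, float]:
    """Expected bad chips per panel and the chance a panel has none (first-pass yield)."""
    p = defect_ppm * 1e-6               # per-chip defect probability
    expected_bad = n_chips * p          # mean of the binomial distribution
    first_pass = (1.0 - p) ** n_chips   # exact binomial P(zero defective chips)
    return expected_bad, first_pass


y = rolled_yield(0.001, 500)
print(f"500 steps at 0.1% loss each: yield {y:.3f}, loss {1 - y:.3f}")

bad, fpy = panel_yield(20_000_000, 0.1)
print(f"20M chips at 0.1 ppm: {bad:.1f} bad chips per panel, first-pass yield {fpy:.3f}")
```

With these inputs the script reports a 500-step yield of about 0.61 and, for the panel, two expected defective chips and a first-pass yield of about 0.135 (close to e^-2).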

The biggest external problems currently facing quality in Taiwan’s manufacturing industry include manpower shortages, difficulty enforcing consistent operating quality, and language barriers with foreign workers, which can easily lead to errors during training. Under these conditions, achieving ZD is becoming more and more difficult. Below we discuss the two main aspects: designing the system and shaping the management culture.

1. The design of the quality management system

The design concepts of the ZD quality system are as follows:

1. Production with no (defective) output, inspection with no (defective) outflow

2. First no outflow, then no output

3. Establish EAR early warning mechanism

4. Radically improving HWCQI with OCAP

In the past, quality was thought to come from inspection; the concept later improved to quality built in through production and design, with the ideal being products exempt from inspection. However, a requirement such as zero defects cannot be met by production alone; a final inspection must be added as insurance to minimize risk. The quality concept therefore has to change: instead of waiting to start mass production until zero defects is assured, rely on a quality system that “captures both ends”, with no output at the front end and no outflow at the back end.

First, when scoring risk factors in a Process Failure Mode and Effects Analysis (PFMEA, Note 1), the severity (failure effect) score should reflect whether a defect can flow out to the customer, not its impact on production yield. In other words, failure modes that can reach the customer should all score close to 8 to 10, while failure modes that only affect in-house output score lower; in the early stages, the design should focus mainly on preventing outflow. To prevent outflow, inspection instruments must be added at the back end for 100% inspection, and the more automated they are, the lower the risk. Once inspection is automated and covers 100% of products, the detection score can immediately drop to 1, which means confidence in no outflow can be very high.

Under this premise, a second PFMEA must be performed on the failure modes of the back-end automated inspection machines themselves; this second layer of insurance is crucial. Many people assume that automated 100% inspection at the back end leaves nothing to worry about, but this is wrong: even automated inspection machines make errors, so risk prevention must be applied to them too, and their possible misjudgments anticipated in advance. Automated inspection can err in two ways: overkill, where a good product is rejected (a false positive), and escape, where a defective product is passed (a false negative). In the early stages of a zero-defect program, machines must be calibrated to err toward overkill rather than escape; only then can they win customers’ confidence and create market demand. Once the product’s overall quality level and the customers’ actual requirements are better understood, the overkill can gradually and appropriately be relaxed to improve yield.

After the back-end no-outflow quality system is in place, we can calmly go back and address the no-output side without the pressure of customer complaints, improving while mass producing, raising yield, and reducing cost.
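To make the overkill/escape trade-off concrete, here is a small Python sketch. It assumes the inspection tool outputs a numeric defect score and rejects parts at or above a threshold; the scores, labels, and function names are invented for illustration and do not come from any particular AOI system.

```python
# Hypothetical example: how the reject threshold of an automated inspection
# tool trades overkill (good part rejected) against escape (bad part shipped).
def inspection_outcomes(scores, is_defective, threshold):
    """Return (overkill, escape) counts; a part is rejected if score >= threshold."""
    overkill = escape = 0
    for score, bad in zip(scores, is_defective):
        rejected = score >= threshold
        if rejected and not bad:
            overkill += 1      # false positive: good part scrapped
        elif not rejected and bad:
            escape += 1        # false negative: defect flows out to the customer
    return overkill, escape


def zero_escape_threshold(scores, is_defective):
    """Loosest threshold that still rejects every known-bad part in the sample."""
    return min(s for s, bad in zip(scores, is_defective) if bad)


# Made-up defect scores (higher = more likely defective) and true labels.
scores = [0.05, 0.20, 0.35, 0.45, 0.55, 0.60, 0.80, 0.95]
is_defective = [False, False, False, True, False, True, True, True]

for t in (0.3, 0.5, 0.7):
    print(t, inspection_outcomes(scores, is_defective, t))

t0 = zero_escape_threshold(scores, is_defective)
print("zero-escape threshold:", t0, inspection_outcomes(scores, is_defective, t0))
```

Lowering the threshold trades escapes for overkill, matching the early-stage bias toward overkill; raising it later cuts overkill, but a zero-escape setting is only as trustworthy as the labelled sample behind it, which in practice must be far larger than this toy one.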

No outflow is governed by Y, the final measurement taken when the finished product is inspected. No output, by contrast, must be controlled further upstream, at the source parameters. In the past, for cost reasons, quality control plans were built around the characteristic parameters y of semi-finished products measured in IPQC (in-process quality control). As the Internet of Things (IoT) becomes cheaper and more widespread, the key equipment parameters x can be controlled directly at the source, so measurement of semi-finished products can be kept to a minimum. These hardware equipment key parameters (HWCQI) are measured directly through IoT connections.

The relationship between the finished-product parameter Y, the semi-finished-product parameters y, and the equipment parameters x is:

$$Y = f(y_1, y_2, \ldots)$$

$$y_1 = g(x_1, x_2, \ldots)$$

The closer a single parameter is to the source, the less it is affected by interactions among other factors, and the easier it is to control directly and keep anomalies from occurring.

So how can no output be achieved? The following sections explain.

(Note 1) PFMEA scores each potential failure mode by multiplying severity × occurrence × detection (the risk priority number, RPN); the higher the score, the higher the risk and the higher the priority for establishing quality monitoring.
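Note 1’s scoring rule can be sketched as below. The failure modes and scores are hypothetical; they show how automated 100% inspection drops the detection score to 1, and why the inspection machine’s own failure mode (the “second insurance” PFMEA) can then rise to the top of the list.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    name: str
    severity: int    # 1-10; per the text, 8-10 if the defect can reach the customer
    occurrence: int  # 1-10; how often the cause is expected to occur
    detection: int   # 1-10; 1 = almost certain to be caught before shipment

    @property
    def rpn(self) -> int:
        """Risk priority number: severity x occurrence x detection."""
        return self.severity * self.occurrence * self.detection


# Hypothetical scores, for illustration only.
modes = [
    FailureMode("scratch reaches customer, manual sampling", 9, 4, 7),
    FailureMode("scratch reaches customer, automated 100% AOI", 9, 4, 1),
    FailureMode("AOI camera drifts out of focus, defects missed", 9, 2, 6),
    FailureMode("film too thin, scrapped in-house", 4, 5, 3),
]

for m in sorted(modes, key=lambda m: m.rpn, reverse=True):
    print(f"{m.rpn:4d}  {m.name}")
```

Once manual sampling is replaced by automated 100% inspection, the remaining high-priority item is the inspection tool’s own failure, which is exactly what the second PFMEA is meant to catch.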

Growing EAR

In the previous section, we discussed no outflow. Now we turn to no output, because no outflow only wins customers’ trust; it does not by itself make a profit. Next, we must push the yield of each station toward 100% and minimize losses from defective products during production; only then can we start moving toward profitability. How do we build a no-output quality system on the production line? The first step is to establish the early alarm response feedback mechanism, EAR. The abbreviation is apt: when we hear something, we must become aware that a problem is occurring. In other words, when a quality characteristic at a station exceeds its control limit (while still within specification), an alarm must be issued immediately so that the person responsible for that station can make corrections in real time. Workers and corporate cultures that emphasize only creativity and personal freedom struggle to adapt to this; to succeed in ZD manufacturing, that working attitude must change.
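The distinction between a control limit and a specification limit is what lets EAR warn before a defect is made. The sketch below assumes each station reports a single numeric characteristic; the limits are hypothetical film-thickness values, and in practice the alarm would be pushed to the station owner rather than printed.

```python
# Minimal EAR-style check: alarm at the control limit, before the spec limit.
def ear_status(value, lcl, ucl, lsl, usl):
    """Classify one measurement.

    Control limits (lcl, ucl) sit inside the specification limits (lsl, usl),
    so a drift raises an alarm while the product is still within spec.
    """
    if value < lsl or value > usl:
        return "out_of_spec"   # already a defect: hold and contain the product
    if value < lcl or value > ucl:
        return "alarm"         # out of control but in spec: correct the station now
    return "ok"


# Hypothetical film-thickness limits in nm.
LIMITS = dict(lcl=97.5, ucl=102.5, lsl=95.0, usl=105.0)

for thickness in (100.2, 103.1, 106.0):
    status = ear_status(thickness, **LIMITS)
    if status != "ok":
        print(f"station alarm: thickness {thickness} nm -> {status}")
```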

The first step is to establish SPC (statistical process control) for the process parameters at each station. SPC covers every characteristic that can be measured numerically, such as the thickness of a coated film or the depth and size of a cut. Upper and lower control limits for these measurements must be calculated with statistical tools and then used to monitor quality throughout the process and check whether it is drifting off-center. The second type is non-numerical data: anomalies in the appearance of patterns, or particle contamination. In the past, these appearance anomalies were checked manually under microscopes. However, as labor shortages grow more severe and staffing less stable, automated optical inspection (AOI) is gradually being introduced, which significantly reduces both manpower needs and the variability of human inspectors. The dimensions of high-tech precision products keep shrinking: typical ones are already at the micrometer scale, and high-end ones such as semiconductor processes reach the nanometer scale. A single tiny particle landing on a pattern can therefore short-circuit adjacent lines, which is why monitoring particles is crucial. Moreover, because processes are so complex, with hundreds or even thousands of steps sometimes going into one finished product, keeping particles down at every step is an extremely important part of the system.
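As a minimal sketch of the SPC step, the code below computes limits for an individuals (I-MR) chart, one common choice when each station yields one reading at a time, and applies one run rule to catch an off-center drift. The chart type, the run rule, and the data are assumptions for illustration; the article does not say which SPC charts are used.

```python
import statistics

D2 = 1.128  # bias-correction constant d2 for moving ranges of two points


def individuals_limits(data):
    """Control limits for an individuals (I-MR) chart: mean +/- 3 * MR-bar / d2."""
    center = statistics.fmean(data)
    moving_ranges = [abs(b - a) for a, b in zip(data, data[1:])]
    sigma_hat = statistics.fmean(moving_ranges) / D2
    return center - 3 * sigma_hat, center, center + 3 * sigma_hat


def off_center(data, center, run_length=8):
    """True if run_length consecutive points fall on the same side of center.

    Eight in a row is the Western Electric rule; some references use nine.
    """
    run, last_side = 0, 0
    for x in data:
        side = (x > center) - (x < center)   # +1 above, -1 below, 0 on the line
        if side != 0 and side == last_side:
            run += 1
        else:
            run = 1 if side != 0 else 0
        last_side = side
        if run >= run_length:
            return True
    return False


# Hypothetical baseline film thickness (nm) used to set the limits.
baseline = [100.1, 99.8, 100.3, 99.9, 100.0, 100.2, 99.7, 100.1, 99.9, 100.0]
lcl, center, ucl = individuals_limits(baseline)
print(f"LCL={lcl:.2f}  CL={center:.2f}  UCL={ucl:.2f}")

# New readings: all inside the limits, but consistently above center.
recent = [100.2, 100.3, 100.1, 100.4, 100.2, 100.3, 100.5, 100.2]
beyond = [x for x in recent if not lcl <= x <= ucl]
print("points beyond limits:", beyond, "| off-center run:", off_center(recent, center))
```

The recent readings never cross a control limit, yet the run rule still flags them, which is the kind of early off-center signal the text asks SPC to provide.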

After SPC and AOI-based IPQC are in place, one more essential monitoring system, related to reliability, is indispensable: ORT (ongoing reliability test). Sometimes a problem on the production line does not affect function at first, but once the product reaches the customer and goes through long periods of temperature and humidity changes, it fails after some time in use, leading to complaints about poor reliability; the losses from this type of complaint are huge. Such failures usually do not show up in any measurable data, nor can they be seen under a microscope. Sometimes they come from chemical materials degrading over time, such as changes in the composition of film materials; others may be caused by residual chemical contamination, or even by degraded materials from suppliers, none of which we can notice immediately. And since testing every product at every station would be prohibitively expensive, a reliability sampling test system must be established: products are sampled and subjected to extreme high-temperature, high-humidity, and high-current stress tests to predict the service life of the whole product population and judge whether its reliability qualifies. This prevents products that appear to function normally from failing after a period of use by end users and having to be recalled, at great cost. That is why ORT is another necessary part of the ZD quality system: it monitors changes in reliability that neither numerical SPC nor appearance inspection can reveal.
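How does a stress test predict service life? One common model for the temperature part is the Arrhenius acceleration factor, sketched below. The activation energy and temperatures are assumed example values, not figures from the article, and humidity and current stresses would need their own models (for example Peck’s model for humidity and Black’s equation for electromigration).

```python
import math

BOLTZMANN_EV = 8.617333262e-5  # Boltzmann constant, eV/K


def arrhenius_af(ea_ev, t_use_c, t_stress_c):
    """Arrhenius acceleration factor between a stress and a use temperature (deg C)."""
    t_use = t_use_c + 273.15
    t_stress = t_stress_c + 273.15
    return math.exp((ea_ev / BOLTZMANN_EV) * (1.0 / t_use - 1.0 / t_stress))


# Assumed values: Ea = 0.7 eV, 25 C in the field, 85 C in the ORT chamber.
af = arrhenius_af(0.7, 25, 85)
test_hours = 1000
field_years = test_hours * af / (24 * 365)
print(f"AF = {af:.0f}; {test_hours} h at 85 C ~ {field_years:.1f} years at 25 C")
```

The result is only as good as the assumed activation energy, which depends on the failure mechanism being accelerated.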

ORT is usually designed to sample a fixed proportion of products for monitoring. In addition, when a process undergoes a slight change and it must be decided whether the affected products can be accepted, an additional ORT must be performed to confirm there are no reliability issues before the products are released.
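The text leaves the sampling ratio open. One common way to size a reliability sample when no failures are allowed is the success-run formula, n = ln(1 - C) / ln(R); the sketch below applies it with assumed reliability and confidence targets. It is offered as one possible sampling rule, not as the plan the article’s authors use.

```python
import math


def zero_failure_sample_size(reliability, confidence):
    """Units to test with zero failures allowed (success-run formula).

    Passing all n units demonstrates `reliability` at `confidence`, since
    reliability ** n <= 1 - confidence.
    """
    return math.ceil(math.log(1.0 - confidence) / math.log(reliability))


for r, c in ((0.99, 0.90), (0.99, 0.95)):
    n = zero_failure_sample_size(r, c)
    print(f"R={r:.0%} at {c:.0%} confidence: test {n} units, zero failures allowed")
```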

Opening EYES

Next: when the EAR hears an abnormal noise, the factory responds with what is called an OCAP (out-of-control action plan). When a process goes beyond our control range, action must be taken. But does the full plan have to be activated for every anomaly, large or small? Some anomalies are common, and their causes can be identified with cause-and-effect (fishbone) diagrams; if the production line already has a group of operators trained to handle them, those operators can be authorized to deal with them according to the standard OCAP procedures. Other anomalies have never been seen before, and this is when the action plan must be activated, gathering experts on each process and piece of equipment to analyze the problem and decide on countermeasures. Which anomalies require activating the action plan and which do not? This is not determined by the severity of the anomaly but by three criteria: the cause is unknown, the scope of the risk cannot be determined, or the anomaly may cause reliability issues. If any one of these conditions is met, OCAP must be activated and the expert team consulted. First, expand the check horizontally to the same equipment and vertically through upstream and downstream processes, and identify all abnormal products so that none flow out; second, identify the cause of the anomaly and implement countermeasures so that it is not produced again.
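The three escalation criteria above amount to a simple rule, sketched below. The `Anomaly` fields and example cases are hypothetical; a real system would draw them from the factory’s anomaly-handling records.

```python
from dataclasses import dataclass


@dataclass
class Anomaly:
    description: str
    cause_known: bool
    risk_scope_known: bool
    may_affect_reliability: bool


def needs_full_ocap(a: Anomaly) -> bool:
    """Escalate if any of the three criteria holds; severity is deliberately not used."""
    return (not a.cause_known) or (not a.risk_scope_known) or a.may_affect_reliability


def route(a: Anomaly) -> str:
    if needs_full_ocap(a):
        return "activate OCAP: convene experts, contain, then find root cause"
    return "trained operator handles it per standard OCAP procedure"


# Hypothetical anomalies.
cases = [
    Anomaly("recurring particle excursion on coater 3", True, True, False),
    Anomaly("new discoloration of unknown origin", False, True, False),
    Anomaly("known cause, but affected lots unclear", True, False, False),
    Anomaly("known cause, possible corrosion risk", True, True, True),
]
for a in cases:
    print(f"{a.description}: {route(a)}")
```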

The analysis and problem-solving method commonly used in the industry for such anomalies is 8D (Note 2), and its most crucial step is D2, problem description. The best tabular method for describing a problem is the plane chart (Note 3). If the problem is not defined clearly, the subsequent cause analysis will go astray; this is a fundamental principle. It is like police solving a murder case: they must first establish whether the motive was love, hatred, or money before deciding which suspects to watch. Direction matters most; if the direction is wrong, there is no way to find the true cause. This is what we mean when we say someone picked the wrong fish: they desperately analyze its fishbone diagram but never find the true cause among its bones. D2 therefore compares data from existing anomalies to help us determine which fish it is. Once the plane chart has confirmed the right fish, the true cause will be trapped on it.

8D (Note 2)

8D method

D1 Form the team

D2 Describe the problem

D3 Contain the problem

D4 Identify the root cause

D5 Formulate and verify corrective actions

D6 Correct the problem and confirm the effects

D7 Prevent the problem

D8 Congratulate the team

Plane chart (Note 3)

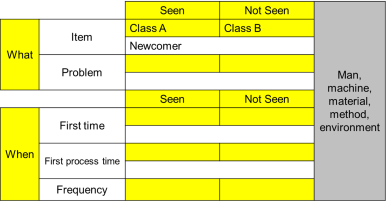

A quick tabular method used to train engineers on how to analyze and solve problems

The chart compares a group in which the problem is seen with a control group in which it is not, so that the differing factors in man, machine, material, method, and environment can be picked out quickly and directly. Because the chart is shaped like a plane, it is commonly known as a plane chart.

For example, paint peels off computer chassis after painting. Analyzing this with a fishbone diagram could turn up many possible causes that must be checked one by one, which takes a lot of time. If, however, the reports show that the defect rate of shift A is much higher than that of shift B, comparing the two shifts’ man, machine, material, method, and environment quickly reveals the key factor: shift A recently took on a large batch of newcomers.
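A plane chart is essentially a side-by-side table of the “seen” and “unseen” groups. The sketch below reproduces the paint-peeling comparison in Python; the dictionary layout, the 4M1E conditions, and the defect rates are hypothetical stand-ins for the two groups in the example.

```python
FACTORS = ("man", "machine", "material", "method", "environment")


def plane_chart(group_a, group_b):
    """Compare a high-defect ('seen') group with a low-defect ('unseen') control group.

    Each group is a dict holding a 'defect_rate' and one entry per 4M1E factor.
    Returns (factor, seen_condition, unseen_condition, differs) rows.
    """
    seen, unseen = sorted((group_a, group_b), key=lambda g: g["defect_rate"], reverse=True)
    return [(f, seen[f], unseen[f], seen[f] != unseen[f]) for f in FACTORS]


# Hypothetical data for the paint-peeling example.
shift_a = {"defect_rate": 0.08, "man": "many new hires this month",
           "machine": "paint line 1", "material": "primer lot 17",
           "method": "bake 20 min", "environment": "booth humidity 55%"}
shift_b = {"defect_rate": 0.01, "man": "experienced crew",
           "machine": "paint line 1", "material": "primer lot 17",
           "method": "bake 20 min", "environment": "booth humidity 55%"}

rows = plane_chart(shift_a, shift_b)
for factor, seen, unseen, differs in rows:
    print(f"{factor:<12}{seen:<28}{unseen:<28}{'<-- differs' if differs else ''}")
candidates = [r[0] for r in rows if r[3]]
print("candidate factors:", candidates)
```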

As artificial intelligence and information technology become more advanced and cheaper, high-tech companies that need speed use EYES (Electronic Yield Enhancement System) to assist in problem analysis; as the name suggests, it opens the engineers’ eyes and keeps them watching. The system has two parts:

● The first is EDA (engineering data analysis), a set of AI (artificial intelligence) software. Once the production data for the fish that has been identified is entered, the AI helps identify the who, what, when, and where associated with the fish head, investigates man, machine, material, method, and environment comprehensively to compare which causes are most probable, and suggests them to engineers, who then narrow down the likely causes, verify the true cause, and find a countermeasure.

● The second is FA (failure analysis), performed on failed samples. Special electron microscopes, some able to resolve nanoscale features, are used to locate abnormal circuits and patterns. Precision composition-analysis instruments can also analyze chemical composition down to ppm or even ppt levels to see whether the product contains impurities that should not be there. AI-assisted analysis can then locate the failure, verify whether the hypothesized cause is correct, and build failure models with far less effort. Using EDA together with FA is like police hunting a murderer: in a sea of people, everyone looks suspicious. EDA tells the police which area to search and helps them lock onto a suspect; FA is then the camera trained on that specific suspect’s trail, eventually capturing the evidence against the real culprit. This is the basic operating principle of the entire EYES system.
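The article’s EDA is AI software; as a much simpler stand-in, the sketch below performs a commonality analysis, ranking each process step and tool by how much more often bad lots passed through it than good lots. This is a named substitute technique, not the EYES algorithm, and the lot histories, step names, and tool IDs are hypothetical.

```python
from collections import defaultdict


def commonality(lots):
    """Rank (step, tool) pairs by how much more often bad lots used them than good lots.

    lots: list of (is_bad, {step: tool}) tuples.
    Returns (share_bad - share_good, (step, tool), share_bad, share_good), largest first.
    """
    bad = [route for is_bad, route in lots if is_bad]
    good = [route for is_bad, route in lots if not is_bad]
    if not bad or not good:
        raise ValueError("need both bad and good lots to compare")
    counts = defaultdict(lambda: [0, 0])          # (step, tool) -> [bad hits, good hits]
    for idx, group in ((0, bad), (1, good)):
        for route in group:
            for step, tool in route.items():
                counts[(step, tool)][idx] += 1
    ranked = []
    for key, (n_bad, n_good) in counts.items():
        share_bad, share_good = n_bad / len(bad), n_good / len(good)
        ranked.append((share_bad - share_good, key, share_bad, share_good))
    return sorted(ranked, reverse=True)


# Hypothetical lot histories.
lots = [
    (True,  {"clean": "WET-2", "etch": "ETCH-1", "plate": "PLT-1"}),
    (True,  {"clean": "WET-2", "etch": "ETCH-2", "plate": "PLT-1"}),
    (True,  {"clean": "WET-2", "etch": "ETCH-1", "plate": "PLT-2"}),
    (False, {"clean": "WET-1", "etch": "ETCH-1", "plate": "PLT-1"}),
    (False, {"clean": "WET-1", "etch": "ETCH-2", "plate": "PLT-2"}),
    (False, {"clean": "WET-2", "etch": "ETCH-2", "plate": "PLT-1"}),
]
for diff, (step, tool), sb, sg in commonality(lots)[:3]:
    print(f"{step}/{tool}: bad {sb:.0%}, good {sg:.0%}, gap {diff:+.2f}")
```

Such a ranking only narrows the search, much like EDA pointing the police to a neighbourhood; confirming the mechanism is still FA’s job.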

These two tools are best used in sequence: first use EDA for D4-1 cause analysis, then use FA for D4-2 root-cause identification, which makes it easier to focus. Using FA directly invites two kinds of error. The first is failing to find evidence for a correct hypothesis: for example, we suspect electrode corrosion was caused by chlorine (Cl), but an error in sampling or sample preparation means the chlorine is not detected, and the hypothesis is rashly discarded. The second is concluding chlorine corrosion as soon as chlorine is detected, when the sample may simply have been contaminated by someone’s hands. The most rigorous approach is first to establish through EDA that every abnormal product went through an abnormal chlorine-containing cleaning step, and then to use FA for final verification. That way, even if an undetected error occurs during sampling or preparation, the hypothesis will not be carelessly discarded but will be verified again.
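One way to see why EDA should come before FA is to treat the FA result as a noisy test and apply Bayes’ rule. The probabilities below are assumptions chosen only to mirror the two errors described above: chlorine missed because of a sampling or preparation error, and chlorine found because of handling contamination.

```python
def posterior(prior, p_detect_if_cause, p_detect_if_not, detected=True):
    """Bayes' rule: P(hypothesis | FA result) for a noisy FA test."""
    if detected:
        hit = p_detect_if_cause * prior
        other = p_detect_if_not * (1 - prior)
    else:
        hit = (1 - p_detect_if_cause) * prior
        other = (1 - p_detect_if_not) * (1 - prior)
    return hit / (hit + other)


# Assumed numbers: FA finds Cl in 80% of truly chlorine-corroded samples
# (sampling/prep can miss it) and in 30% of other samples (handling contamination).
SENS, FALSE_POS = 0.8, 0.3
for label, prior in (("FA alone", 0.1), ("after EDA", 0.7)):
    yes = posterior(prior, SENS, FALSE_POS, detected=True)
    no = posterior(prior, SENS, FALSE_POS, detected=False)
    print(f"{label}: Cl found -> {yes:.2f}, Cl not found -> {no:.2f}")
```

With a weak prior, a positive FA result still leaves the chlorine hypothesis unlikely and a negative one all but discards it; once EDA has raised the prior, a positive result is convincing and a negative one leaves the hypothesis open for re-verification.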

To stress it again: achieving no output at the source means finding the critical equipment parameters that can be controlled directly at the source (HWCQI), ideally x parameters. Once such a root cause is found, connect and monitor it directly through IoT, because the closer a single parameter is to the source, the less it is affected by interactions among other factors, and the easier it is to control.

The cumulative benefits of driving OCAP continuously are considerable. Looking back, each finding can be fed back to strengthen the quality control plan and avoid yield loss; looking forward, the same cause can be used to improve and raise yield. In other words, every crisis becomes an opportunity, and every anomaly, large or small, is accumulated as expertise that keeps driving the company’s ZD quality improvement cycle toward perfection.

The above is the methodology for designing the ZD quality system. Next, let’s talk about another aspect.

2. Management culture

After the design and establishment of the system are completed, it depends on the manager responsible for implementing the system. How well a company does is determined by the culture shaped by the manager’s thinking.

1. Traceability - Only transparent information can be traced, and anomalies responded to in real-time.

2. Continuous Improvement - Turn problems into improvement opportunities and seize them.

3. Internal Communication - Democratic and smooth communication channels, listen to the voices of the grassroots to avoid misdirection.

4. Listen to Customer - Listen to the voices of customers and earn their reliance.

5. Closed-loop control - A control system that feeds problems found at the output end back to the input end for prevention.

6. Production oriented - Every department proves its value by serving the production unit, delivering on time and at the required quality.

7. AI for leaving work on time - Invest resources first in building AI and smart systems that let employees leave work on time.

8. Lean production - Continue to improve the ZD quality system using the “three nos” of lean production.

1) No Rule No do

2) No sure No try

3) No Lean No accept

As long as managers keep pushing themselves, never slacken, and never deviate from the principles above, the culture, once established, will give the company an immune system that protects ZD.

Summary of arguments:

A company’s profit comes from two major sources: business strategy, used to seize external opportunities, and operational performance, used to lower internal management risk. The first is where points can be gained; the second is where points must not be lost.

Operational performance is the most important foundation for technology companies that rely on expensive, high-precision equipment for production. When two companies buy similar machinery, their market competitiveness depends on who runs it better, which also means who achieves lower cost, surpasses the competitor, and wins customers’ favor, since the equipment itself is the same. If business strategies are otherwise no different, the fundamental competition is over who can maximize the output of their operations.

Companies should not think only about beating competitors through differences in business strategy. To surpass competitors in a cruel market, the most fundamental thing is to maximize the output of the equipment assets they have purchased: aim first to lose no points, then to gain more. Only this way can they hold a winning position from the start. A good business strategy then makes them even stronger, letting them compete boldly from a solid foundation and pull ahead of competitors.

It is common to see business owners on both sides of the Taiwan Strait who do not calmly work on improving their companies from within. They talk emptily about strategy every day and think only about winning, never about how not to lose first. This puts the cart before the horse, and their internal management never becomes lean. When the economy is good, customers tolerate the problems, simply picking out the acceptable products and using them. But when the economy turns bad, customer complaints rise sharply, and returns and exchanges continue until the company is losing money and must borrow from every source just to hold on. The lucky ones survive until the economy recovers. Then, seeing others make big money, they want in on the action; they listen to salespeople’s rosy visions, decide to win through volume, and borrow to invest, only to find after investing that the waste from poor efficiency is even worse. Their improvements can never keep pace with vanishing opportunities, and when the economy turns again, their overall cost structure is worse than before and they lose even more. At this point the factory and the sales side start blaming each other: one says sales exaggerated the external opportunities, the other says the factory’s internal operations are poor. Countless companies have gone bankrupt and closed this way. By contrast, a company that focuses on internal operational performance first can still make a profit while others are losing money; when the economy improves, it can quickly replicate its profit formula and scale up its results. Even if the economy suddenly turns and profits become hard to come by, it can use its accumulated profits to wage a price war, a powerful tool for driving competitors out of the market.

If a company’s senior managers neglect internal operations, forever dreaming of strategic opportunities and talking emptily every day, the company is in real danger. Turning all the operational effort and investors’ resources into pipe dreams is shameful. If you keep your eyes on the rainbow across the sky and ignore the potholes at your feet, you will keep stumbling as you run the enterprise: any profit will come only by luck, and losses will follow again and again.

Perfect the ZD zero-defect quality management system first, lay a solid foundation, and allow the enterprise to become an organism that can cycle and improve continuously. Then, you can cope with the ever-changing industry more calmly and stand more firmly.
